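Common causes and fixes

1. Wrong imports: make sure you import org.apache.hadoop.mapreduce.Job and org.apache.hadoop.mapreduce.Mapper. If you import org.apache.hadoop.mapred.Mapper instead (the interface from the old API), the argument no longer matches the type that Job.setMapperClass expects, and the call fails to compile.

2. The Mapper class extends the wrong parent: a custom Mapper must extend org.apache.hadoop.mapreduce.Mapper and override its map method.

3. The wrong class is passed in: job.setMapperClass must receive the .class object of your Mapper class, not an instance and not the .class of some unrelated class.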
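The three causes above come down to the same thing: the parameter of setMapperClass is a bounded wildcard, `Class<? extends Mapper>`, so the argument must be the class object of a subclass of that exact Mapper type. The sketch below illustrates this with stand-in types so that it compiles without Hadoop on the classpath. `NewApiMapper`, `OldApiMapper`, `FakeJob`, `GoodMapper` and `BadMapper` are hypothetical names invented for this illustration, not Hadoop classes.

```java
public class BoundCheckDemo {
    // Hypothetical stand-in for org.apache.hadoop.mapreduce.Mapper (a class in the new API).
    static class NewApiMapper {}

    // Hypothetical stand-in for org.apache.hadoop.mapred.Mapper (an interface in the old API).
    interface OldApiMapper {}

    // Extends the expected parent, so its class object satisfies the bound.
    static class GoodMapper extends NewApiMapper {}

    // Belongs to the other type hierarchy, so its class object does not satisfy the bound.
    static class BadMapper implements OldApiMapper {}

    // Hypothetical stand-in for Job, with the same bounded-wildcard parameter shape.
    static class FakeJob {
        private Class<? extends NewApiMapper> mapperClass;

        void setMapperClass(Class<? extends NewApiMapper> cls) {
            this.mapperClass = cls;
        }

        Class<? extends NewApiMapper> getMapperClass() {
            return mapperClass;
        }
    }

    // Runtime equivalent of the check the compiler performs on the argument type.
    static boolean isAcceptable(Class<?> candidate) {
        return NewApiMapper.class.isAssignableFrom(candidate);
    }

    public static void main(String[] args) {
        FakeJob job = new FakeJob();
        job.setMapperClass(GoodMapper.class);   // compiles: GoodMapper extends NewApiMapper

        // job.setMapperClass(BadMapper.class);     // does not compile: wrong hierarchy
        // job.setMapperClass(new GoodMapper());    // does not compile: an instance, not a Class

        System.out.println(isAcceptable(GoodMapper.class)); // prints "true"
        System.out.println(isAcceptable(BadMapper.class));  // prints "false"
    }
}
```

In a real job, the same rule means that fixing the import or the `extends` clause of the Mapper is usually enough to make the original error go away.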

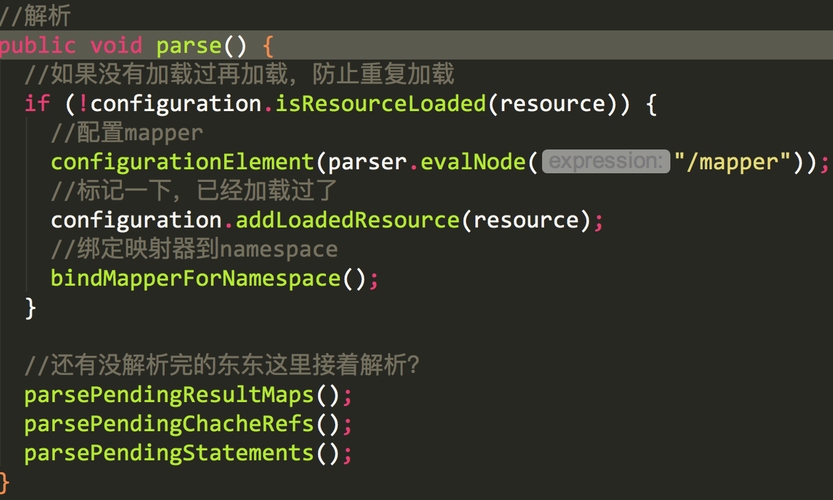

Example code

The following example shows a correct use of setMapperClass:

    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class WordCount {

        // Emits (word, 1) for every whitespace-separated token in an input line.
        public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {

            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();

            @Override
            public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
                StringTokenizer itr = new StringTokenizer(value.toString());
                while (itr.hasMoreTokens()) {
                    word.set(itr.nextToken());
                    context.write(word, one);
                }
            }
        }

        // Sums the counts for each word; also used as the combiner.
        public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

            private IntWritable result = new IntWritable();

            @Override
            public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable val : values) {
                    sum += val.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            Job job = Job.getInstance(conf, "word count");
            job.setJarByClass(WordCount.class);
            // Pass the .class object of a class that extends org.apache.hadoop.mapreduce.Mapper.
            job.setMapperClass(TokenizerMapper.class);
            job.setCombinerClass(IntSumReducer.class);
            job.setReducerClass(IntSumReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            // args[0] is the input path, args[1] the output path.
            FileInputFormat.addInputPath(job, new Path(args[0]));
            FileOutputFormat.setOutputPath(job, new Path(args[1]));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

FAQs

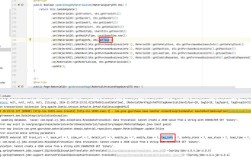

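1. Why do I get the error "The method setMapperClass(Class<? extends Mapper>) in the type Job is not applicable for the arguments"? This error usually means that org.apache.hadoop.mapreduce.Mapper was not imported correctly, or that the custom Mapper class does not extend org.apache.hadoop.mapreduce.Mapper.

2. How do I make sure my custom Mapper class is recognized and used correctly? Make sure the class is in the correct package and can be found at both compile time and run time. Also make sure that the argument to job.setMapperClass is the .class object of that Mapper class, not some other class or object.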
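One way to check both conditions at run time is to load the class by name and confirm that it is a subclass of the expected parent. The sketch below uses only the standard `Class.forName` and `Class.asSubclass` methods. `BaseMapper`, `TokenizerMapper`, `NotAMapper` and the `check` helper are hypothetical stand-ins for this illustration; in a real job the parent would be org.apache.hadoop.mapreduce.Mapper.

```java
public class MapperLookupDemo {
    // Hypothetical stand-in for org.apache.hadoop.mapreduce.Mapper.
    public static class BaseMapper {}

    // A class that extends the expected parent.
    public static class TokenizerMapper extends BaseMapper {}

    // A class that exists but is unrelated to BaseMapper.
    public static class NotAMapper {}

    // Reports whether the named class can be loaded and extends BaseMapper.
    static String check(String className) {
        try {
            // asSubclass throws ClassCastException if the class is not a BaseMapper subclass.
            Class.forName(className).asSubclass(BaseMapper.class);
            return "ok";
        } catch (ClassNotFoundException e) {
            return "not found";
        } catch (ClassCastException e) {
            return "not a mapper subclass";
        }
    }

    public static void main(String[] args) {
        System.out.println(check(TokenizerMapper.class.getName())); // prints "ok"
        System.out.println(check(NotAMapper.class.getName()));      // prints "not a mapper subclass"
        System.out.println(check("com.example.MissingMapper"));     // prints "not found"
    }
}
```

A "not found" result points to a packaging or classpath problem, while "not a mapper subclass" points to a wrong import or a wrong `extends` clause.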